HNSW Indexes and Custom Prompts!

Zep v0.13.0, released today, now includes support for HNSW indexes and the ability to set custom prompts for summarization tasks.

Hierarchical Navigable Small World (HNSW) indexes are faster and more accurate than the IVFFlat indexes previously used by Zep. They are also simpler to maintain, as they don't need a manual "indexing" (or training) step.

HNSW indexes

For new installs of Zep, Message vector search and document Collections use HNSW indexes by default.

For upgrades, only new document Collections will be indexed with HNSW.

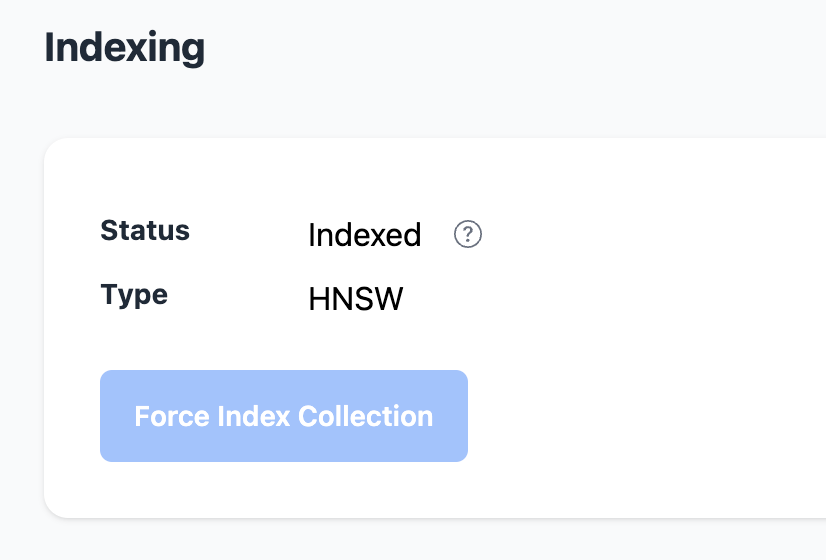

To determine the index type of a Collection, view the Collection's details in the Zep web interface or query the Zep API.

Query the Zep API for collection details (also available via the TypeScript SDK):

    collection = client.document.get_collection(collection_name)

Prerequisites to using HNSW indexes

To use HNSW indexes, you must ensure that the Postgres database back-end you use offers pgvector v0.5 or later. This is the case when installing with Zep's Docker Compose setup, but cloud providers may only offer earlier versions of pgvector.
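If you are unsure which pgvector version your provider offers, the check can be sketched roughly as follows. This is a minimal illustration, not Zep's own startup check: `parse_version` and `supports_hnsw` are hypothetical helper names, and the version string is assumed to come from running the standard Postgres catalog query shown in `PGVECTOR_VERSION_SQL` with whatever database client you already use.

```python
import re

# Minimum pgvector version with HNSW support, per the prerequisite above.
MIN_HNSW_VERSION = (0, 5, 0)

# Standard Postgres catalog query for an installed extension's version.
# Run it with your own database client; no client is assumed here.
PGVECTOR_VERSION_SQL = (
    "SELECT extversion FROM pg_extension WHERE extname = 'vector'"
)


def parse_version(version: str) -> tuple[int, int, int]:
    """Parse a version string such as '0.5' or '0.5.1' into a 3-tuple."""
    match = re.fullmatch(r"(\d+)\.(\d+)(?:\.(\d+))?", version.strip())
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    major, minor, patch = match.groups()
    return int(major), int(minor), int(patch or 0)


def supports_hnsw(pgvector_version: str) -> bool:
    """Return True if this pgvector version is new enough for HNSW indexes."""
    return parse_version(pgvector_version) >= MIN_HNSW_VERSION
```

Comparing parsed tuples rather than raw strings avoids mis-ordering versions such as `0.10.0` and `0.5.0`.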

The Zep logs will display a message similar to the following when HNSW indexes are available:

    INFO[2023-10-04T13:40:37-07:00] vector extension version is >= 0.5.0. hnsw indexing available

Custom prompts

Custom Summary Extractor prompts are now available! You can configure these in Zep's config.yaml file or your environment.

    # OpenAI summarizer prompt configuration.
    # Guidelines:
    # - Include {{.PrevSummary}} and {{.MessagesJoined}} as template variables.
    # - Provide a clear example within the prompt.
    #
    # Example format:
    # openai: |
    #   <YOUR INSTRUCTIONS HERE>
    #   Example:
    #     <PROVIDE AN EXAMPLE>
    #   Current summary: {{.PrevSummary}}
    #   New lines of conversation: {{.MessagesJoined}}
    #   New summary:`
    #
    # If left empty, the default OpenAI summary prompt from zep/pkg/extractors/prompts.go will be used.
    openai: |

Custom prompts can be configured to provide summaries in the language of your users or to improve the existing prompts for your particular business.

When used alongside a local or open-source LLM with an OpenAI-compatible API, custom prompts can also enable summarization for models that behave differently from OpenAI's GPT family or Anthropic's Claude models.
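Before deploying a custom prompt, it can help to confirm that it contains both required template variables and to preview roughly what the model will receive. The sketch below is only an approximation for local checking: Zep renders prompts itself on the server, and the helper names `missing_variables` and `preview_prompt`, as well as joining messages with newlines, are assumptions made for illustration.

```python
import re

# The two template variables the configuration guidelines require.
REQUIRED_VARIABLES = ("{{.PrevSummary}}", "{{.MessagesJoined}}")


def missing_variables(prompt: str) -> list[str]:
    """Return the required template variables that the prompt lacks."""
    return [var for var in REQUIRED_VARIABLES if var not in prompt]


def preview_prompt(prompt: str, prev_summary: str, messages: list[str]) -> str:
    """Substitute sample values into a custom prompt for a local preview.

    This is a plain text substitution, not Zep's actual template
    rendering, and the newline join is an assumption.
    """
    missing = missing_variables(prompt)
    if missing:
        raise ValueError(f"prompt is missing template variables: {missing}")
    values = {
        "PrevSummary": prev_summary,
        "MessagesJoined": "\n".join(messages),
    }
    # Single pass, so substituted text is never re-scanned for variables.
    return re.sub(
        r"\{\{\.(PrevSummary|MessagesJoined)\}\}",
        lambda match: values[match.group(1)],
        prompt,
    )
```

A quick preview with a short prior summary and two or three sample messages is usually enough to spot a misspelled variable or an awkwardly placed example.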

Thanks to Brice Macias for contributing this feature!

To learn more, read the Custom Summary Extractor Prompts documentation.